Use a custom provider when the provider you need is not in the catalog. The Cloud dashboard accepts a models.dev-style JSON definition, stores the shared credential once, and then lets desktop workspaces import it.

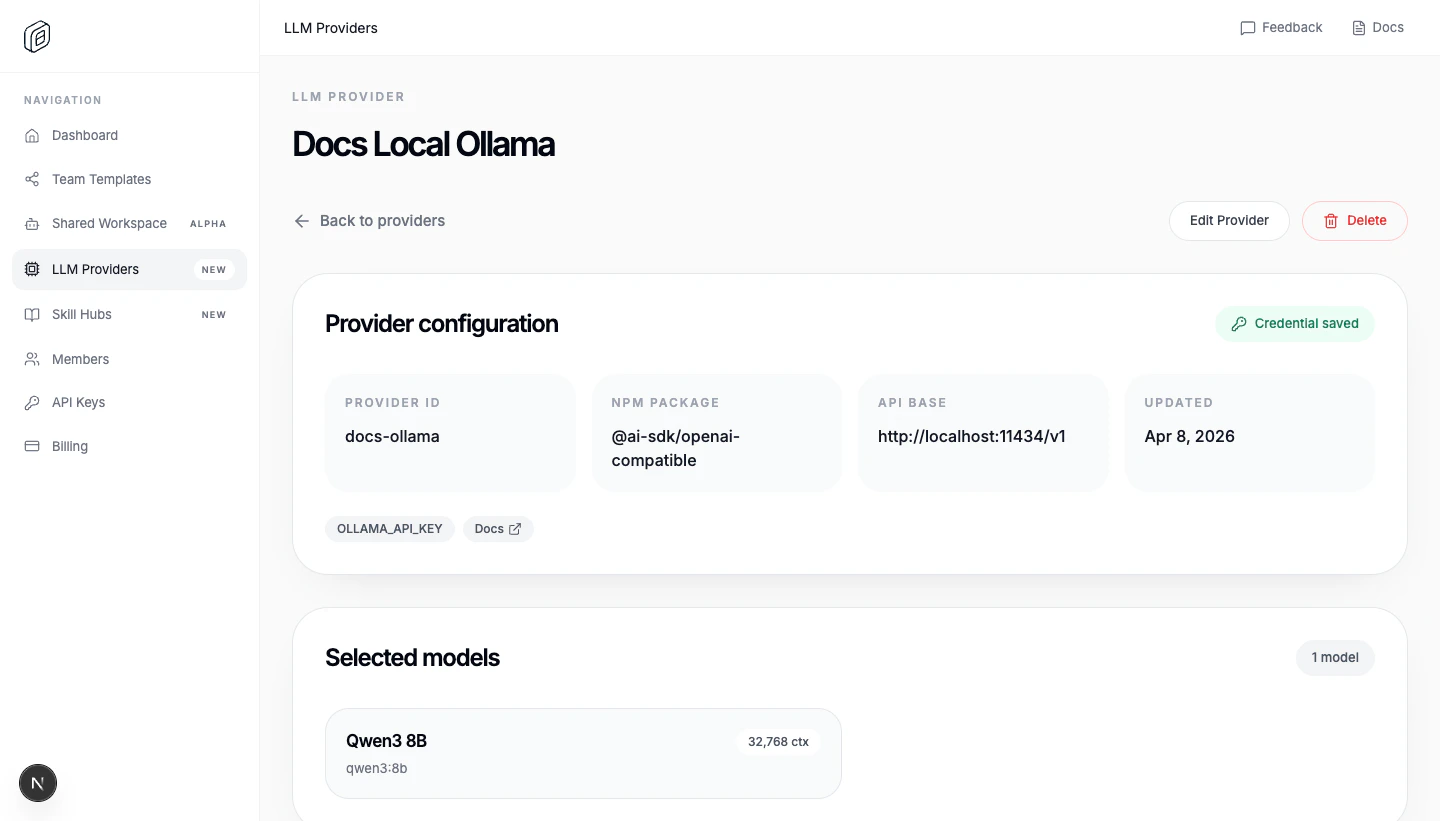

Create the custom provider

- Open LLM Providers.
- Click Add Provider.
- Switch to Custom provider.
- Paste the Custom provider JSON.
- Paste the shared API key / credential.
- Choose People access and/or Team access.
- Click Create Provider.

The JSON must include the id, name, npm, env, doc, and models fields. The api field is optional, but most OpenAI-compatible providers use it. The editor also requires valid JSON, at least one environment variable, and at least one model.
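The editor checks listed above can be approximated in a few lines. This is a minimal sketch, not the dashboard's actual validator: `validateProviderJson` is a hypothetical name, and it assumes, following the models.dev convention, that `env` is an array of environment-variable names and `models` is an object keyed by model id.

```typescript
// Fields the editor requires (api is optional and not checked here).
const REQUIRED_FIELDS = ["id", "name", "npm", "env", "doc", "models"];

// Returns a list of problems; an empty list means the text passed these checks.
function validateProviderJson(text: string): string[] {
  let parsed: unknown;
  try {
    parsed = JSON.parse(text);
  } catch {
    return ["invalid JSON"];
  }
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    return ["top-level value must be a JSON object"];
  }
  const obj = parsed as Record<string, unknown>;
  const errors: string[] = [];
  for (const field of REQUIRED_FIELDS) {
    if (!Object.prototype.hasOwnProperty.call(obj, field)) {
      errors.push(`missing field: ${field}`);
    }
  }
  // Assumption: env is an array of environment-variable names.
  const env = obj.env;
  if (!Array.isArray(env) || env.length === 0) {
    errors.push("env must list at least one environment variable");
  }
  // Assumption: models is an object keyed by model id.
  const models = obj.models;
  if (
    typeof models !== "object" ||
    models === null ||
    Array.isArray(models) ||
    Object.keys(models).length === 0
  ) {
    errors.push("models must contain at least one model");
  }
  return errors;
}
```

Returning a list of problems rather than stopping at the first one lets an editor report every issue at once.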

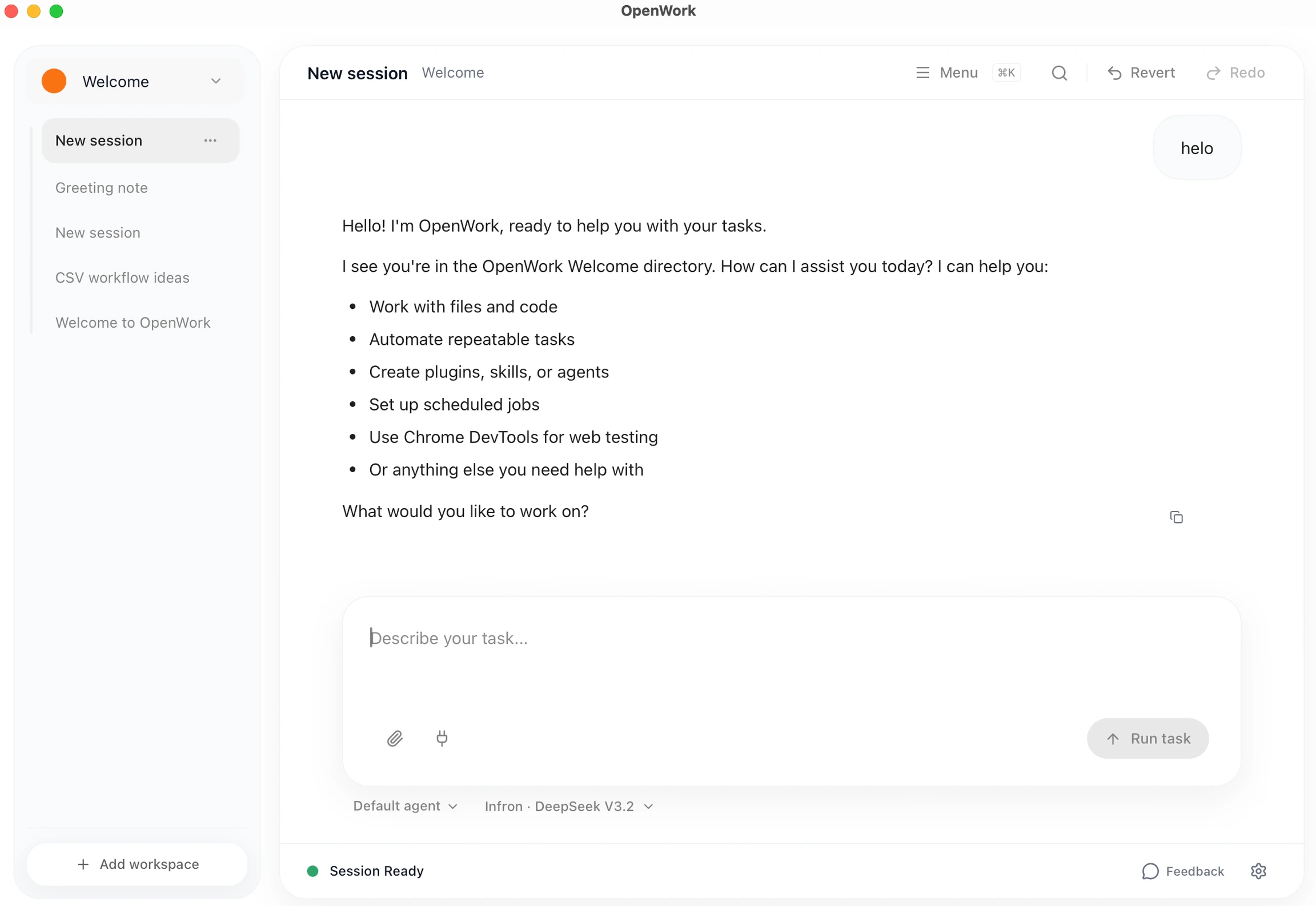

Import it into the desktop app

- Open Settings -> Cloud.
- Choose the correct Active org.
- Under Cloud providers, click Import.
- Reload the workspace when OpenWork asks.

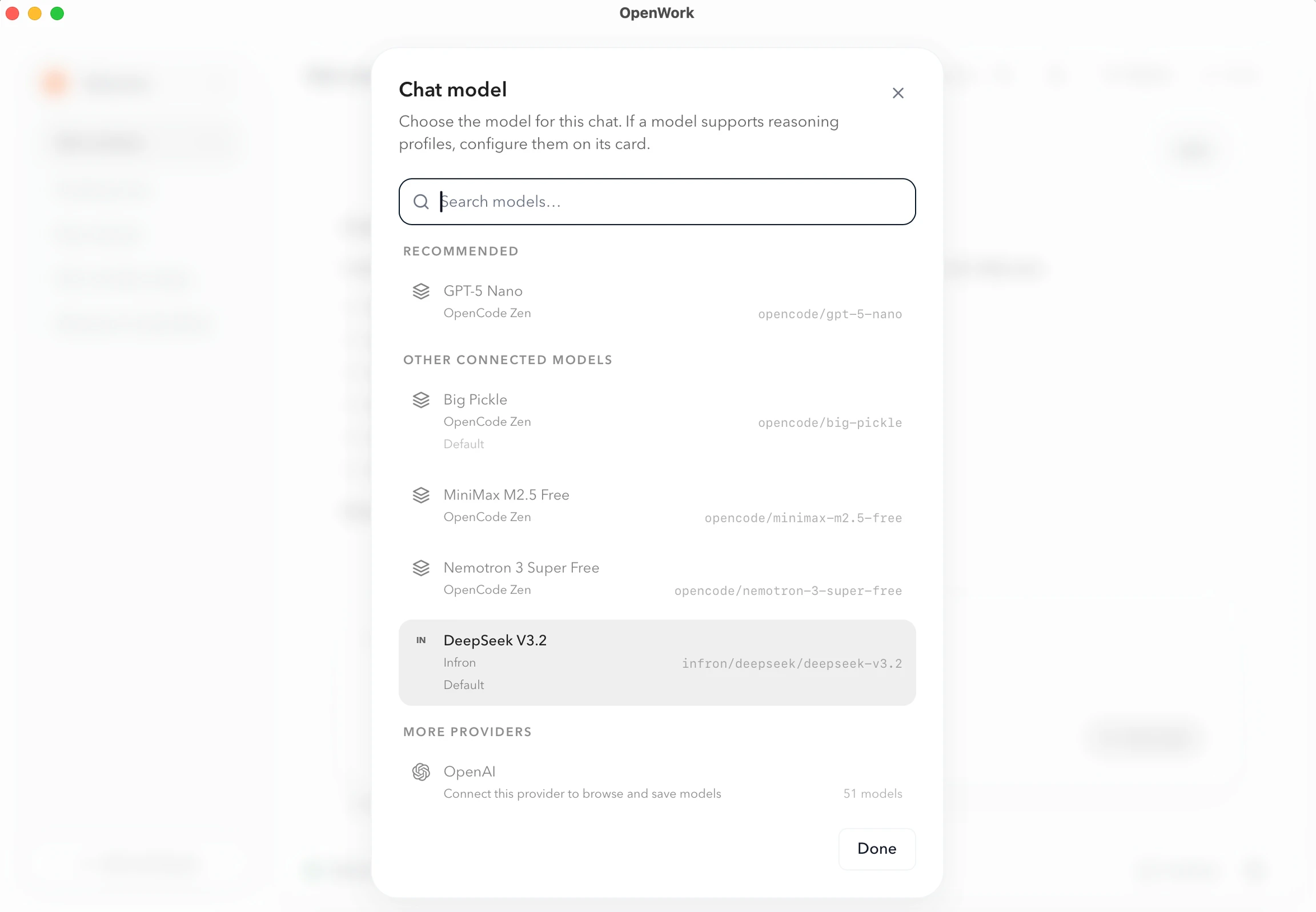

Functional example: an LLM gateway (Infron)

An LLM gateway is one OpenAI-compatible endpoint that fans out to many model providers. If you want to run the routing yourself, LiteLLM is a good starting point. Infron is a hosted option: one API key gives you access to every model in its marketplace, with automatic provider fallbacks and a single invoice, so adding a new LLM to OpenWork is just another entry under models.
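The fallback part of that description can be sketched as a loop that tries each upstream in order and returns the first success. This illustrates the general technique only; it is not how Infron or LiteLLM implement routing, and `Upstream` and `completeWithFallback` are hypothetical names. A production gateway would likely also need timeouts, retries, and streaming support.

```typescript
// A hypothetical upstream provider: resolves with a completion or rejects on failure.
type Upstream = {
  name: string;
  complete: (prompt: string) => Promise<string>;
};

// Try each upstream in order and return the first successful result.
// Failures are collected so the final error explains why every upstream failed.
async function completeWithFallback(
  upstreams: Upstream[],
  prompt: string,
): Promise<{ provider: string; text: string }> {
  const failures: string[] = [];
  for (const upstream of upstreams) {
    try {
      const text = await upstream.complete(prompt);
      return { provider: upstream.name, text };
    } catch (err) {
      const reason = err instanceof Error ? err.message : String(err);
      failures.push(`${upstream.name}: ${reason}`);
    }
  }
  throw new Error(`all upstreams failed (${failures.join("; ")})`);
}

// Usage: the primary upstream fails, so the request falls through to the backup.
const upstreams: Upstream[] = [
  { name: "primary", complete: async () => { throw new Error("503"); } },
  { name: "backup", complete: async (p) => `echo: ${p}` },
];
completeWithFallback(upstreams, "hi").then((r) => console.log(r.provider, r.text)); // logs "backup echo: hi"
```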

Grab a key from Infron's API Keys dashboard. If you don’t have an account yet, sign up at infron.ai/login; their quickstart walks you through your first request.
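Because the gateway is OpenAI-compatible, a first request follows the standard chat completions shape: a POST to `{baseURL}/chat/completions` with a Bearer token and a JSON body naming the model. This sketch only builds the request so it runs offline; `buildChatRequest` is a hypothetical helper, and `https://gateway.example.com/v1` is a placeholder, not Infron's real base URL (take that from their quickstart).

```typescript
// Shape of a request that could be passed to fetch(url, init).
interface ChatRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// Build (but do not send) an OpenAI-compatible chat completions request.
function buildChatRequest(
  baseURL: string,
  apiKey: string,
  model: string,
  prompt: string,
): ChatRequest {
  // Strip trailing slashes so "/v1/" and "/v1" produce the same URL.
  const url = `${baseURL.replace(/\/+$/, "")}/chat/completions`;
  return {
    url,
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({
        model,
        messages: [{ role: "user", content: prompt }],
      }),
    },
  };
}

// Placeholder base URL and key: replace with real values before sending.
const req = buildChatRequest("https://gateway.example.com/v1", "YOUR_API_KEY", "openai/gpt-5.4", "Say hello");
console.log(req.url); // https://gateway.example.com/v1/chat/completions
```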

Paste the key into API key / credential and use this JSON:

Add entries under models to expose other routes the gateway supports (e.g. openai/gpt-5.4, google/gemini-2.5-flash).
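If the models map is keyed by route id, as in models.dev, adding routes amounts to adding keys. A minimal sketch, assuming each entry needs only a `name` (check the models.dev schema for the other per-model fields); `ModelEntry` and `addGatewayRoutes` are hypothetical names.

```typescript
// Hypothetical per-model value; only `name` is assumed here.
type ModelEntry = { name: string };

// Return a copy of the models map with extra gateway routes added.
// Existing entries are left unchanged; new route ids double as display names.
function addGatewayRoutes(
  models: Record<string, ModelEntry>,
  routes: string[],
): Record<string, ModelEntry> {
  const next: Record<string, ModelEntry> = { ...models };
  for (const route of routes) {
    if (!Object.prototype.hasOwnProperty.call(next, route)) {
      next[route] = { name: route };
    }
  }
  return next;
}

const models = addGatewayRoutes({}, ["openai/gpt-5.4", "google/gemini-2.5-flash"]);
console.log(JSON.stringify({ models }, null, 2));
```

Copying the map instead of mutating it keeps the original definition intact, which makes it easy to diff before pasting the result back into the editor.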